Amazon Quick Bug Exposed AI Chat Agents to Users Blocked by Custom Permissions

Imagine that your organization’s most sensitive data sits behind enterprise AI tools. You have configured access controls and trust that only authorized personnel can interact with these agents. Now picture discovering that a flaw let blocked users bypass those restrictions and use your AI Chat Agents as if no controls existed. According to security researchers at Fog Security, this is not hypothetical: they reported an authorization bypass in Amazon Quick’s AI Chat Agents.

This vulnerability highlights a critical oversight in access management for AI-powered solutions, demonstrating that even carefully configured permissions can be undermined by subtle software defects. For IT professionals, security analysts, and developers, understanding the mechanics of such flaws is paramount to building and maintaining secure AI infrastructures.

The Amazon Quick AI Chat Agent Vulnerability Explained

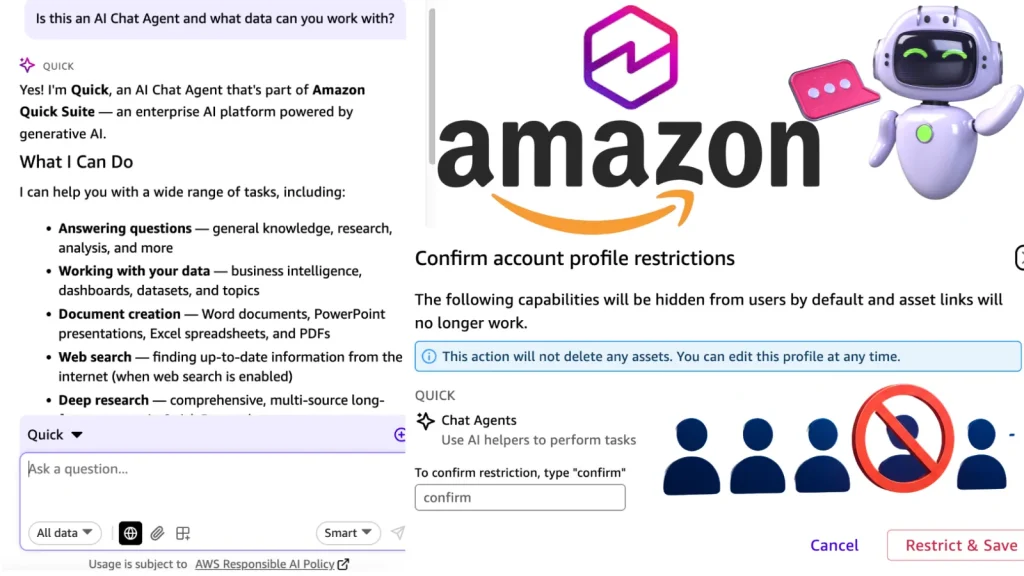

The core of this vulnerability is an authorization bypass in Amazon Quick’s AI Chat Agents. Specifically, Fog Security researchers found that users who were explicitly blocked by custom permissions could still interact with the agents. In effect, the vault door was in place but its lock was defective: the block was configured, yet it was not enforced.

Amazon Quick, a service designed to enhance productivity through AI-driven capabilities, relies heavily on establishing clear user permissions to regulate access to sensitive information and functionalities. When these permissions fail, the integrity of data and the confidentiality of operations are immediately compromised. The bug allowed blocked individuals to query, retrieve information, and potentially manipulate data through the AI interface, circumventing the intended security model.
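A common defense against this class of flaw is a single deny-by-default check in which an explicit block always overrides an allow, called from every entry point that can reach the agent. The Python sketch below illustrates that pattern only. It is not Amazon Quick’s implementation, whose internals are not described here, and `Policy`, `is_authorized`, `handle_chat_request`, and the `chat_agent:invoke` action name are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-user permission record (not Amazon Quick's data model)."""
    allowed_actions: frozenset = frozenset()
    blocked_actions: frozenset = frozenset()


def is_authorized(policy: Optional[Policy], action: str) -> bool:
    """Deny by default; an explicit block always overrides an allow."""
    if policy is None:
        return False  # no policy on record: deny
    if action in policy.blocked_actions:
        return False  # explicit block wins over any allow
    return action in policy.allowed_actions


def handle_chat_request(policies: Dict[str, Policy], user_id: str, prompt: str) -> str:
    """Hypothetical entry point: the check runs before the agent is reached."""
    if not is_authorized(policies.get(user_id), "chat_agent:invoke"):
        raise PermissionError(f"{user_id} may not use the chat agent")
    return f"agent response to: {prompt}"  # stand-in for the real agent call
```

Testing such a check from the blocked user’s side matters as much as testing the allowed path: a user whose policy both allows and blocks `chat_agent:invoke` should receive a `PermissionError`, not a response.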

Impact and Potential Exploitation Scenarios

The potential impact of such an authorization bypass is significant and far-reaching. For organizations utilizing Amazon Quick AI Chat Agents for internal knowledge bases, code generation, customer support, or data analysis, the exposure of these AI tools to unauthorized users could lead to:

- Data Leakage: Sensitive company information, client data, or proprietary algorithms accessible through the AI could be inadvertently or maliciously extracted.

- Manipulation and Misinformation: Unauthorized users might be able to feed incorrect data to the AI or solicit misleading responses, potentially impacting business decisions or public perception.

- Operational Disruption: Access to internal AI tools could lead to misuse, causing performance degradation or service interruptions.

- Compliance Violations: Exposure of regulated data (e.g., HIPAA, GDPR) could result in severe financial penalties and reputational damage.

Consider an ex-employee whose access was revoked but who can still query the company’s confidential project details or customer records through the AI Chat Agent. Or consider an attacker who, having gained initial low-level access, uses this bug to reach information they should never see.

Remediation Actions for Authorization Bypass Vulnerabilities

Effectively addressing authorization bypass vulnerabilities like the one found in Amazon Quick requires a multi-layered approach. The following remediation actions are crucial for securing AI-powered systems:

- Apply Vendor Patches Immediately: The most direct and critical step is to apply any patches or updates released by Amazon to address this specific vulnerability. Always monitor official vendor security advisories.

- Strict Access Control Re-evaluation: Conduct a thorough audit of all AI Chat Agent access controls and permissions. Ensure that blocking mechanisms are properly configured and tested from the perspective of a blocked user.

- Principle of Least Privilege: Enforce the principle of least privilege rigorously. Users should only have access to the AI functionalities and data absolutely necessary for their role.

- Implement Robust Authentication and Authorization Frameworks: Utilize strong authentication methods (e.g., MFA) and advanced authorization frameworks that integrate directly with identity and access management (IAM) solutions.

- Regular Security Audits and Penetration Testing: Perform routine security audits, including penetration testing, specifically targeting authorization mechanisms within AI-driven applications. This helps identify similar flaws before they are exploited.

- Monitoring and Logging: Implement comprehensive logging for all AI Chat Agent interactions, including user access attempts (both successful and failed), queries made, and data retrieved. Monitor these logs for anomalous activity.

- Dedicated Vulnerability Disclosure Programs: Encourage responsible disclosure from security researchers by having clear vulnerability reporting channels.
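The monitoring item in the list above can be sketched as a small log audit that flags any request from a blocked user that was nonetheless allowed. This is a minimal illustration under stated assumptions: the JSON Lines log format and the `user` and `outcome` field names are hypothetical and do not reflect any actual Amazon Quick or AWS log schema.

```python
import json
from typing import Iterable, List, Set


def find_blocked_user_activity(log_lines: Iterable[str], blocked_users: Set[str]) -> List[dict]:
    """Return events where a blocked user's request was recorded as allowed.

    Assumes one JSON object per line with hypothetical "user" and
    "outcome" fields; adapt the field names to the real log schema.
    """
    findings = []
    for line in log_lines:
        line = line.strip()
        if not line:
            continue  # ignore blank lines
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip malformed lines rather than abort the audit
        if not isinstance(event, dict):
            continue  # only JSON objects are treated as events
        if event.get("user") in blocked_users and event.get("outcome") == "allowed":
            findings.append(event)
    return findings
```

Any non-empty result means a block was configured but not enforced, which is exactly the condition this article describes, so it is worth alerting on rather than only reporting.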

No CVE identifier for this Amazon Quick vulnerability is cited here. Authorization bypass issues of this kind generally fall under broad access control weakness categories. For example, a bug like this could plausibly be classified under CWE-285: Improper Authorization, which covers cases where a product does not perform, or incorrectly performs, an authorization check when an actor attempts to access a resource or perform an action.

Tools for Detecting and Mitigating Access Control Issues

Preventing and detecting authorization bypasses requires a combination of robust development practices and specialized security tools. Here are some relevant tools:

| Tool Name | Purpose | Link |
|---|---|---|
| OWASP ZAP | Web application security scanner to find various vulnerabilities, including access control issues. | https://www.zaproxy.org/ |
| Burp Suite | Industry-standard tool for web security testing, essential for manual and automated vulnerability discovery. | https://portswigger.net/burp |
| Identity and Access Management (IAM) Solutions | Manage user identities and access privileges across cloud resources and applications (e.g., AWS IAM, Azure AD). | https://aws.amazon.com/iam/ |
| Security Information and Event Management (SIEM) Systems | Collects and analyzes security logs from various sources to detect and alert on suspicious activities. | https://www.splunk.com/en_us/products/platform/security-information-event-management-siem.html |

Conclusion

The discovery of an authorization bypass in Amazon Quick’s AI Chat Agents serves as a potent reminder of the complexities inherent in securing modern AI-driven enterprise solutions. While the convenience and power of AI are undeniable, the underlying security mechanisms must be rigorously tested and continuously monitored to prevent unauthorized access and protect sensitive data. Organizations must prioritize applying vendor patches, enforcing the principle of least privilege, and implementing comprehensive security audits to safeguard their AI investments. Neglecting these fundamental security practices can leave even the most advanced systems vulnerable to exploitation, turning powerful tools into potential liabilities.