Claude Vulnerabilities Allow Data Exfiltration and User Redirection to Malicious Sites

Unmasking Claudy Day: Critical Claude.ai Vulnerabilities Exposing Sensitive Data and Redirecting Users

Anthropic’s Claude.ai, a widely adopted AI assistant, recently faced a significant security challenge. A chain of three vulnerabilities, collectively dubbed “Claudy Day,” allowed attackers to silently exfiltrate sensitive conversation data and redirect unsuspecting users to malicious websites. The exploit was particularly concerning because it required no complex integrations, external tools, or intricate server configurations, which points to a weakness in the platform itself rather than in optional add-ons.

The responsible disclosure of these vulnerabilities to Anthropic by security researchers underscores the vital role of ethical hacking in fortifying digital defenses. This deep dive explores the nature of these vulnerabilities, their potential impact, and crucial remediation strategies for users and developers alike.

The Anatomy of Claudy Day: A Chained Vulnerability Explained

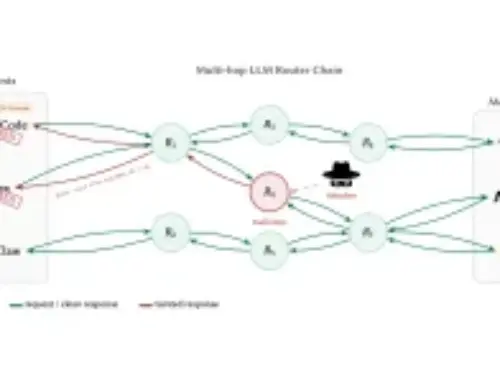

The “Claudy Day” attack vector leveraged a sequence of three distinct vulnerabilities that, when chained together, created a powerful and stealthy exploitation path. While specific CVE identifiers were not publicly detailed at the time of the initial report, the impact described points to critical logic flaws and potential input validation issues within the Claude.ai platform. This chain allowed for:

- Silent Data Exfiltration: Attackers could programmatically extract sensitive user conversation data. This implies a method to bypass content filtering or access controls, potentially revealing proprietary information, personal details, or confidential dialogues.

- Malicious User Redirection: Without user consent or visible prompts, individuals could be diverted from Claude.ai sessions to attacker-controlled websites. Such redirection is a common tactic for phishing, malware distribution, or credential harvesting.

- Low Barrier to Entry: The attack required no integrations, tools, or Model Context Protocol (MCP) server configurations, which made it highly accessible and lowered the technical expertise needed for a successful exploit.

The implications of such a vulnerability chain are profound: it could compromise user privacy, erode trust in AI platforms, and expose individuals to further cyber threats.

Impact Analysis: What These Vulnerabilities Mean for Users and Businesses

The “Claudy Day” vulnerabilities presented a direct threat to the confidentiality and integrity of user interactions with Claude.ai. The primary impacts include:

- Sensitive Data Compromise: Any information shared during a Claude.ai conversation, from personal inquiries to business-critical discussions, was potentially at risk of being stolen by attackers. This constitutes a severe breach of privacy and data security.

- Phishing and Malware Risks: User redirection to malicious sites is a classic gateway for further attacks. Victims could be tricked into entering login credentials on fake websites or into downloading malware, and a drive-by download can infect a device without any further action from the user.

- Erosion of Trust: For AI platforms, trust is paramount. Vulnerabilities like this undermine user confidence in the security and reliability of AI assistants, potentially leading to reduced adoption and usage.

- Reputational Damage: For businesses relying on AI for internal operations or customer interaction, a security breach like this can lead to significant reputational damage and potential legal ramifications.

Remediation Actions and Best Practices

Because the vulnerabilities were responsibly disclosed to Anthropic, these specific issues have likely been addressed. Even so, the incident is a reminder to both users and developers of AI platforms that proactive security measures are essential.

For Users of AI Assistants:

- Exercise Caution with Sensitive Information: Avoid inputting highly sensitive or confidential personal and business data into public AI models.

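- Verify Redirected Links: Always scrutinize the URL of any website you are redirected to, especially after interacting with an AI. Check that the domain name is exactly the one you expect and that the connection uses HTTPS. HTTPS alone does not prove a site is legitimate, since phishing sites can also obtain certificates.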

- Use Strong, Unique Passwords: In case of a phishing attempt, having unique passwords for different services can limit the damage of compromised credentials.

- Report Suspicious Activity: If you suspect any unusual behavior or redirection while using an AI assistant, report it immediately to the platform provider.
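
For readers who want to automate the link check described above, the following is a minimal sketch in Python. The `TRUSTED_HOSTS` allowlist and the `is_trusted_url` helper are illustrative assumptions, not part of any Claude.ai tooling; the domains listed are examples to be replaced with the ones you actually trust.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist for illustration; replace with domains you trust.
TRUSTED_HOSTS = {"claude.ai", "anthropic.com"}


def is_trusted_url(url: str, trusted_hosts: set[str] = TRUSTED_HOSTS) -> bool:
    """Return True only for HTTPS URLs whose host is a trusted domain
    or a subdomain of one."""
    try:
        parts = urlsplit(url)
    except ValueError:
        # Malformed URLs (for example, broken IPv6 brackets) are rejected.
        return False
    if parts.scheme != "https":
        return False
    # hostname strips any "user@" prefix and port, and is lowercased.
    host = (parts.hostname or "").rstrip(".")
    if not host:
        return False
    # Match the exact domain or a dot-separated subdomain, so that
    # "claude.ai.evil.example" does not pass as "claude.ai".
    return any(host == d or host.endswith("." + d) for d in trusted_hosts)
```

Comparing the parsed hostname rather than searching the raw string matters here: look-alike URLs such as `https://claude.ai@evil.example/` or `https://claude.ai.evil.example/` contain the trusted name as text but resolve to a different host.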

For AI Platform Developers and Security Teams:

- Implement Robust Input Validation: Thoroughly validate all user inputs to prevent injection attacks and other forms of malicious data manipulation.

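- Enforce Strict Content Security Policies (CSPs): A CSP specifies which sources a browser may load and execute content from, and which destinations a page may send data to (through directives such as connect-src, img-src, and form-action). This narrows the channels available for script injection and data exfiltration. A CSP does not by itself stop a user from following a link to another site, so redirect targets should also be validated on the server side.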

- Conduct Regular Security Audits and Penetration Testing: Proactive testing can uncover chained vulnerabilities and logic flaws before they are exploited by malicious actors.

- Establish a Responsible Disclosure Program: Encouraging and facilitating ethical hacking through a clear disclosure program is vital for identifying and fixing vulnerabilities.

- Employ Behavior Analysis: Implement systems to detect anomalous user behavior or suspicious patterns of data access that might indicate an ongoing attack.

- Stay Updated with Security Best Practices: The threat landscape for AI is rapidly evolving. Continuously update security protocols and technologies to counter new attack vectors.
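
To make the CSP recommendation above more concrete, here is a minimal sketch in Python that serializes a policy into a `Content-Security-Policy` header value. The `build_csp` helper and the `POLICY` contents are assumptions chosen for illustration; they describe a hypothetical chat web app and are not the policy of any real platform. The directive names themselves are standard CSP directives.

```python
def build_csp(directives: dict[str, list[str]]) -> str:
    """Serialize directives into a Content-Security-Policy header value."""
    return "; ".join(
        f"{name} {' '.join(sources)}" for name, sources in directives.items()
    )


# Hypothetical policy for an illustrative chat web app.
POLICY = {
    "default-src": ["'self'"],       # fallback for directives not listed
    "script-src": ["'self'"],        # no inline or third-party scripts
    "connect-src": ["'self'"],       # fetch/XHR/WebSocket destinations
    "img-src": ["'self'", "data:"],  # blocks image beacons to other hosts
    "form-action": ["'self'"],       # forms may only post back to the app
    "frame-ancestors": ["'none'"],   # page may not be embedded in frames
    "base-uri": ["'self'"],          # prevents <base> tag hijacking
}

header_value = build_csp(POLICY)
```

The resulting string would be sent as the value of the `Content-Security-Policy` response header. Restricting `connect-src`, `img-src`, and `form-action` is what limits exfiltration channels; a policy like this does not control where a clicked link navigates, which is why server-side validation of redirect targets remains necessary.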

| Tool Name | Purpose | Link |
|---|---|---|
| OWASP ZAP | Web application security scanner (dynamic application security testing) | https://www.zaproxy.org/ |
| Burp Suite | Integrated platform for performing security testing of web applications | https://portswigger.net/burp |
| Nessus | Vulnerability scanner for identifying software flaws and misconfigurations | https://www.tenable.com/products/nessus |
| Snyk | Developer-first security for finding and fixing vulnerabilities in code, dependencies, containers, and infrastructure | https://snyk.io/ |

Conclusion

The “Claudy Day” vulnerabilities served as a stark reminder that even sophisticated AI platforms are not immune to complex attack chains. The ability to exfiltrate sensitive data and redirect users without detection underscores the critical importance of layered security, rigorous testing, and a robust responsible disclosure framework. For both developers and end-users, vigilance and adherence to security best practices are paramount in navigating the evolving landscape of AI security. As AI integration grows, so does the responsibility to protect the data and trust placed in these powerful tools.