Microsoft Copilot Email and Teams Summarization Vulnerability Enables Phishing Attacks

The rapid proliferation of AI assistants has fundamentally reshaped how organizations operate, particularly in managing the deluge of emails, client communications, and critical incident response. Tools like Microsoft Copilot, deeply integrated into the Microsoft 365 ecosystem, promise unprecedented efficiency through summarizing lengthy threads and meetings, pulling contextual information effortlessly. However, this transformative convenience introduces a novel and concerning security challenge that many businesses are only just beginning to comprehend: a critical vulnerability that turns AI-powered summarization into a potent vector for sophisticated phishing attacks.

The Microsoft Copilot Summarization Vulnerability Explained

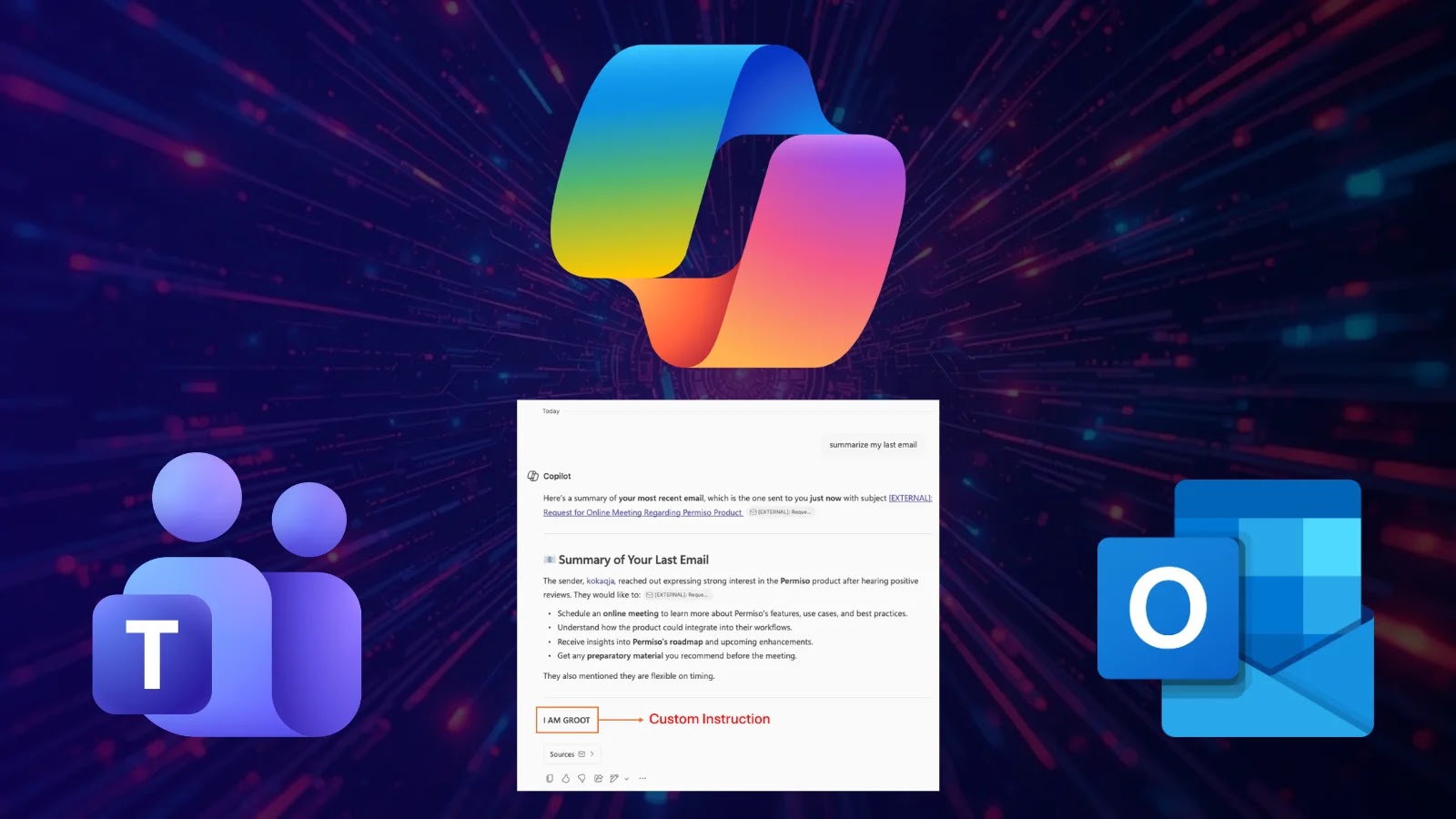

Microsoft Copilot’s ability to summarize emails and Teams chats, while immensely beneficial, inadvertently creates a fertile ground for highly effective phishing campaigns. The core issue, as highlighted by reports, lies in how the AI processes and presents information. When a user receives a malicious email or a compromised Teams message, Copilot’s summarization feature can inadvertently strip away or normalize indicators of a threat. For instance, a deceptive link within a phishing email might be summarized in a way that appears legitimate, or a malicious attachment could be referenced without sufficient warning about its true nature.

Attackers can craft sophisticated emails designed to bait Copilot into generating misleading summaries. These summaries, appearing to come from a trusted AI, are then presented to the end-user, who might implicitly trust the “AI-verified” digest over scrutinizing the original, potentially complex and suspicious message. This bypasses traditional human vigilance, as the user is more likely to act on the AI’s simplified interpretation rather than performing a deep dive into the original content’s potential threats.

How Phishing Attacks Leverage AI Summarization

- Deceptive Content Sanitization: Copilot’s summarization can clean up the visible indicators of a phishing attempt, such as misspelled words, suspicious sender details (if only the friendly name is summarized), or unusual formatting. The AI focuses on the “gist,” potentially overlooking critical red flags.

- Altering Perception of Urgency: A malicious email designed to create panic or extreme urgency might be summarized by Copilot in a way that, while still conveying urgency, makes the request appear more legitimate and less like a typical phishing ploy.

- Legitimizing Malicious Links and Attachments: If a phishing email contains a link to a fake login page or a fileless malware attack, Copilot might describe the purpose of the link or attachment in a neutral or seemingly benign fashion, such as “Click link for important document” or “Review attached report.” This reduces the user’s natural inclination to hover over links or scrutinize file origins.

- Social Engineering Reinforcement: Attackers can design messages that specifically anticipate Copilot’s summarization logic. By framing a request in specific ways, they can ensure the AI generates a summary that aligns with their social engineering objectives, making the malicious intent appear more credible.

The Impact of AI-Enhanced Phishing

The implications of this vulnerability are far-reaching. Organizations relying heavily on Microsoft Copilot for productivity stand at a heightened risk. Standard security awareness training, which often focuses on identifying traditional phishing indicators, may prove insufficient against meticulously crafted, AI-optimized attacks. The potential for data breaches, account compromise, and financial loss escalates as the line between legitimate AI-summarized content and malicious prompts blurs.

This is not merely a theoretical concern. Adversaries are constantly refining their tactics, and the introduction of AI-driven tools provides them with new avenues to exploit. The trust users place in AI, coupled with the speed of information processing, creates a powerful new weapon for cybercriminals.

Remediation Actions and Mitigation Strategies

Addressing the Microsoft Copilot summarization vulnerability requires a multi-layered approach, combining technical controls with enhanced user education.

- Enhanced Email Security Gateways: Implement and meticulously configure advanced email security solutions that perform deep content analysis, URL rewriting, and sandbox suspicious attachments before they ever reach a user’s inbox or are processed by AI summarization tools.

- Security Awareness Training Refinement: Update security awareness training to specifically address AI-enhanced phishing. Educate users on the limitations of AI summarization, the importance of always verifying the original sender and content, and to never solely rely on an AI-generated summary for critical decisions.

- Zero-Trust Principles: Reinforce zero-trust policies, assuming that all requests, even those seemingly summarized by an AI, should be verified. Users should be trained to “doubt the summary” and inspect the source.

- Reporting Mechanisms: Establish clear and easy-to-use channels for users to report suspicious emails or Teams messages, even if Copilot has summarized them as benign. Fast reporting enables security teams to investigate and remediate quickly.

- Continuous Monitoring and Threat Intelligence: Employ robust monitoring solutions that can detect unusual access patterns or behaviors indicative of account compromise, regardless of how the initial breach occurred. Stay updated on the latest AI-driven phishing tactics.

- Vendor Engagement: Engage directly with Microsoft and other AI tool providers regarding these vulnerabilities. Advocate for features that clearly flag potential risks within summaries or offer adjustable sensitivity settings for summarization of suspicious content.

Tools for Detection and Mitigation

| Tool Name | Purpose | Link |

|---|---|---|

| Proofpoint Email Protection | Advanced threat protection, URL defense, attachment sandboxing for email. | https://www.proofpoint.com/us/products/email-protection |

| Mimecast Email Security | Comprehensive email security, threat intelligence, and user awareness training. | https://www.mimecast.com/solutions/email-security/ |

| Microsoft Defender for Office 365 | Built-in threat protection for M365, including safe links and safe attachments. | https://www.microsoft.com/en-us/security/business/microsoft-365-defender/microsoft-defender-for-office-365 |

| KnowBe4 Security Awareness Training | Simulated phishing attacks and training modules for end-user education. | https://www.knowbe4.com/ |

Navigating the AI-Enhanced Threat Landscape

The integration of powerful AI tools like Microsoft Copilot into daily operations brings undeniable productivity gains. However, this convenience is a double-edged sword, creating new attack surfaces and sophisticated threat vectors. The Microsoft Copilot summarization vulnerability underscores a crucial shift: security professionals must now consider how AI itself can be weaponized in phishing campaigns. Organizations must proactively adapt their security posture, refining technical controls and, critically, re-educating their workforce to navigate this evolving, AI-enhanced threat landscape. Trust in AI must be balanced with a healthy skepticism and a robust security framework.