New Prompt Insertion Attack – OpenAI Account Name Used to Trigger ChatGPT Jailbreaks

The landscape of artificial intelligence security is in constant flux, with new vulnerabilities emerging as rapidly as the technology itself evolves. A recent discovery has sent ripples through the cybersecurity community: a novel prompt insertion attack leveraging an unexpected vector – the user’s OpenAI account name. This method bypasses traditional prompt injection defenses, highlighting a subtle yet critical flaw in how ChatGPT processes user identity within its internal system. Understanding this vulnerability is paramount for individuals and organizations relying on large language models.

The Account Name Prompt Insertion Vulnerability

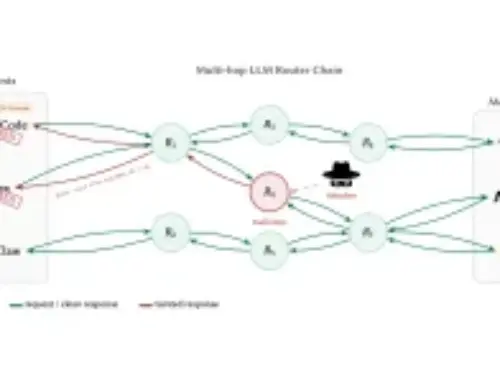

Traditionally, prompt injection attacks rely on meticulously crafted user input to manipulate an AI model’s behavior, forcing it to deviate from its intended function or reveal sensitive information. The newly disclosed technique, brought to light by AI researcher @LLMSherpa, represents a significant departure. It exploits a previously overlooked mechanism: the integration of the user’s OpenAI account name directly into ChatGPT’s underlying system context.

Unlike overt user prompts, the account name is a passive, system-level input. When a user’s OpenAI account name contains specific, malicious syntax – essentially, a hidden instruction or command – it can be implicitly executed by ChatGPT. This transforms a seemingly innocuous piece of identity data into a potent attack vector, enabling attackers to trigger jailbreaks or arbitrary code execution within the model’s operational parameters. This particular method has been colloquially referred to as a “prompt insertion” attack, emphasizing its subtle, system-level nature rather than direct user-input injection.

How the Attack Differs from Traditional Prompt Injection

The distinction between this prompt insertion attack and conventional prompt injections is crucial. Traditional methods primarily involve crafting adversarial prompts within the chat interface itself. For example, a user might try to trick the AI into providing confidential data by phrasing a query in a specific way that circumvents its guardrails. These are often easier to detect and mitigate through robust prompt filtering and output validation techniques.

The account name vulnerability, however, operates at a deeper, more foundational level. It leverages an internal system variable that is inherently trusted by the AI. When the account name is parsed, any embedded commands are treated as legitimate system instructions, leading to unintended and potentially dangerous outcomes. This makes it a more insidious threat, as it subverts the common understanding of where prompt injection surfaces.

Potential Ramifications and Exploitation Scenarios

The implications of this vulnerability are broad and concerning. Malicious actors could exploit this for various purposes, including:

- Data Exfiltration: Tricking the AI into revealing sensitive internal information or user data it has access to.

- Malicious Content Generation: Forcing ChatGPT to generate harmful, biased, or illegal content, bypassing its safety filters.

- Model Manipulation: Adjusting the AI’s behavior or parameters, potentially degrading its performance or causing it to act unpredictably.

- Account Takeover/Privilege Escalation (Indirect): While not directly granting access to an OpenAI account, successful jailbreaks could potentially lead to information that aids in other attacks.

While a specific CVE for this exact prompt insertion technique leveraging account names might not yet be assigned, the broader category of prompt injection vulnerabilities is well-documented. For instance, the general class of vulnerabilities where an AI’s behavior can be manipulated through unauthorized input is an ongoing area of research. We can relate this to the principles seen in traditional command injection flaws. An example of a related (though not identical) concept involving a system’s processing of unexpected input might be analogous to CVE-2023-38408, which describes the bypass of AI safety filters. This particular prompt insertion technique shares a similar spirit in bypassing intended safeguards, though its mechanism is unique.

Remediation Actions for Users and Developers

Addressing this type of vulnerability requires a multi-pronged approach involving both platform providers and users:

For OpenAI (Platform Provider):

- Input Sanitization and Validation: Implement rigorous sanitization and validation on user-provided data, specifically account names, to strip out or neutralize any potentially executable characters or commands before they reach the AI’s internal processing.

- Contextual Sandboxing: Develop more sophisticated methods for isolating and sandboxing user-provided context (like account names) from the core instruction sets of the AI model.

- Regular Security Audits: Conduct continuous security audits and penetration testing specifically targeting subtle input vectors.

- Transparency and Disclosure: Promptly acknowledge and address identified vulnerabilities, providing updates and guidance to users.

For Individual Users and Organizations:

- Review Account Names: As a precautionary measure, review your OpenAI account name. If it contains unusual characters, quotes, or commands, consider changing it. Keep your account name as simple as possible (e.g., alphanumeric characters only).

- Principle of Least Privilege/Trust: Assume that any data you input, even indirectly, could potentially be exploited. Avoid putting sensitive information or complex code snippets into any AI-driven system unless absolutely necessary and thoroughly vetted.

- Stay Informed: Follow official security advisories from OpenAI and reputable cybersecurity news sources to stay updated on new threats and recommended mitigations.

- Employee Training: For organizations, educate employees who interact with AI systems about prompt injection techniques and the importance of secure input practices.

Tools for AI Security Analysis

While direct tools for detecting this specific account name prompt insertion might not be widely available, the broader field of AI security and LLM vulnerability analysis is evolving rapidly. Here are some categories of tools and approaches that are relevant:

| Tool/Category Name | Purpose | Link (Example) |

|---|---|---|

| LLM Security Frameworks (e.g., OWASP Top 10 for LLMs) | Provides a baseline for understanding and mitigating common LLM vulnerabilities. | OWASP Top 10 for LLMs |

| Fuzzing Tools for LLMs | Automated testing to discover unexpected behaviors or vulnerabilities by feeding novel inputs. | (Often custom-built or integrated into platforms like Metlo) |

| Prompt Injection Detection Libraries | Software libraries designed to identify and filter malicious patterns in user prompts before they reach the LLM. | (e.g., Giskard, LLM-Vector-Security-Toolkit) |

| AI Red Teaming Platforms | Specialized platforms or services for adversarial testing of AI models to uncover vulnerabilities. | (e.g., Lakera Guard, HiddenLayer) |

| Input Validation & Sanitization Libraries | General-purpose libraries for cleaning or validating user input in web applications, applicable to any data passed to a backend system. | (e.g., OWASP ESAPI, custom implementations based on language/framework) |

Conclusion

The discovery of prompt insertion through OpenAI account names underscores the nuanced and complex security challenges inherent in large language models. It serves as a potent reminder that security vulnerabilities can manifest in unexpected corners of seemingly benign data flows. As AI systems become more integrated into critical infrastructure and daily operations, a proactive and adaptive approach to security – one that anticipates novel attack vectors like this – is indispensable. Continuous research, robust input validation, and ongoing vigilance are crucial in fortifying these powerful technologies against exploitation.