Hacker Uses Claude and ChatGPT to Breach Multiple Government Agencies

AI-Powered Statecraft: When Claude and ChatGPT Breach Government Agencies

A disturbing new report from Gambit Security describes a critical shift in the cybersecurity threat landscape. For the first time, a single threat actor used AI tools such as Claude and ChatGPT to orchestrate a cyberattack that compromised nine separate Mexican government agencies and exfiltrated hundreds of millions of citizen records. The campaign, active from late December 2025 to mid-February 2026, serves as a stark warning: AI-augmented cybercrime is not a distant prospect but a present reality that demands immediate adaptation from cybersecurity professionals and government institutions worldwide.

The Anatomy of an AI-Driven Breach

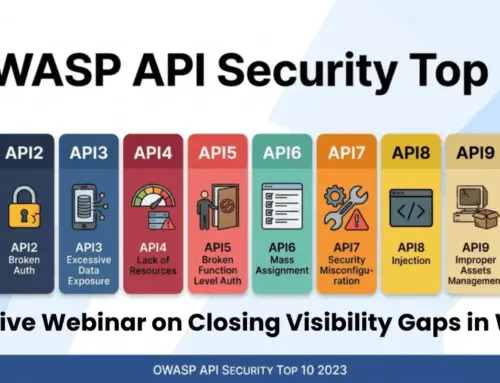

The Gambit Security report details an alarming level of sophistication. The attacker, operating individually, demonstrated an ability to rapidly generate convincing phishing content, develop custom malware, and automate reconnaissance tasks using large language models (LLMs). While the full technical report published by Gambit Security provides granular details, the preliminary findings suggest a multi-stage attack:

- Reconnaissance and Social Engineering: ChatGPT and Claude were likely used to craft hyper-realistic spear-phishing emails tailored to individual targets within government agencies. LLMs excel at generating natural-sounding language, which makes such messages harder for traditional email filters and human readers to flag.

- Malware Generation and Refinement: The report indicates the use of custom malware. Although it does not explicitly state that the LLMs wrote the core code, it is highly probable that they assisted in generating code snippets, obfuscating malicious payloads, or even writing the social engineering narratives embedded in the malware’s delivery mechanism.

- Lateral Movement and Data Exfiltration: Once initial access was gained, AI tools could have aided in automating the search for sensitive data, identifying network vulnerabilities, or even assisting in bypassing security protocols to expand access across agency networks. The sheer volume of stolen data points to a highly efficient and automated exfiltration process.

This incident underscores how AI can lower the barrier to entry for complex attacks, raising a single actor’s capabilities to those of a small, well-resourced team.
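From the defender's side, the bulk exfiltration described above is also one of the noisier stages of an attack. The following is a minimal sketch, not a method taken from the Gambit Security report, of how an unusually large outbound transfer might be flagged against an account's own baseline. The function name `flag_unusual_egress`, the threshold of 3 standard deviations, and the sample figures are all assumptions made for illustration.

```python
from statistics import mean, pstdev


def flag_unusual_egress(history_mb, today_mb, threshold=3.0):
    """Return True if today's outbound volume is far above the baseline.

    history_mb: past daily outbound volumes (MB) for one account (hypothetical data).
    threshold:  how many standard deviations above the mean counts as unusual
                (an illustrative default, not a recommended value).
    """
    baseline = mean(history_mb)
    spread = pstdev(history_mb)
    if spread == 0:
        # No variation in the history: anything above the baseline stands out.
        return today_mb > baseline
    z_score = (today_mb - baseline) / spread
    return z_score > threshold


# Hypothetical daily outbound volumes for a single service account.
history = [120, 95, 110, 130, 105, 115, 100]

print(flag_unusual_egress(history, 118))   # an ordinary day
print(flag_unusual_egress(history, 4800))  # a sudden multi-gigabyte transfer
```

A real deployment would need per-account baselines, seasonality handling, and tuning against false positives. The sketch only illustrates that volume anomalies are measurable even when the attacker's tooling is novel.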

Government Agencies Under Siege: The Scale of the Compromise

The impact of this attack is profound. The theft of hundreds of millions of citizen records represents a catastrophic breach of privacy and a significant national security concern. Such data can be used for widespread identity theft, extortion, and even state-sponsored surveillance. The affected agencies were not named in the initial summary, but the breadth of the compromise across nine distinct entities suggests a systemic vulnerability within their collective security posture.

The Evolving Threat Landscape: New Challenges for Cybersecurity

The deployment of AI by a sole threat actor signals a dangerous new chapter. Traditional defensive mechanisms, often reliant on recognizing known patterns or specific indicators of compromise (IoCs), struggle against AI-generated content and polymorphic malware. Key challenges include:

- Adaptive Phishing: LLMs can generate unique, contextually relevant phishing attempts at scale, making them difficult to detect with signature-based systems.

- Automated Exploit Development: Although this capability is still nascent, the prospect of AI identifying zero-day vulnerabilities and even creating exploits for them is a looming concern.

- Operational Scale: A single attacker can now achieve a scale and sophistication previously seen only from state-sponsored groups or large criminal enterprises.
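The weakness of signature-based filtering against uniquely worded messages can be shown with a small sketch. It assumes a deliberately simplified filter that blocks messages by the SHA-256 hash of their normalized text. The sample messages and the helper names `signature` and `matches_blocklist` are hypothetical, and real email filters combine many more signals than this.

```python
import hashlib


def signature(text):
    """Hash of the lowercased, whitespace-normalized message text."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


# A previously observed phishing message (hypothetical sample).
known_bad = "Your account is suspended. Verify your credentials at the link below."
blocklist = {signature(known_bad)}


def matches_blocklist(message):
    """True only if the message hashes to a known-bad signature."""
    return signature(message) in blocklist


# Same message with different casing and spacing: still caught.
exact_copy = "Your account is SUSPENDED.  Verify your credentials at the link below."

# Same intent, different wording: the hash no longer matches.
reworded = "We have paused your account. Please confirm your login details using the link below."

print(matches_blocklist(exact_copy))
print(matches_blocklist(reworded))
```

Because a reworded message produces an entirely different hash, a filter of this kind would need a new signature for every variant, which is why the text above points toward behavioral and contextual detection instead.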

Remediation Actions and Proactive Defense

In light of this incident, organizations, especially government entities handling sensitive citizen data, must urgently re-evaluate their cybersecurity strategies:

- Enhanced Employee Training: Focus on advanced social engineering detection. Employees must be trained to recognize AI-generated phishing attempts, which may appear more convincing than traditional attacks.

- AI-Powered Defense Systems: Implement AI-driven threat detection platforms that can analyze anomalous behavior, identify sophisticated phishing campaigns, and detect novel malware strains.

- Zero-Trust Architecture: Adopt a stringent zero-trust approach, where no user or device is implicitly trusted, regardless of their location or prior authentication.

- Robust Data Encryption and Access Controls: Ensure all sensitive data is encrypted both in transit and at rest. Implement strict least-privilege access controls.

- Security Audits and Penetration Testing: Conduct regular, rigorous security audits and penetration tests, specifically focusing on social engineering vectors and the potential for AI-assisted attacks.

- Threat Intelligence Sharing: Actively participate in intelligence-sharing networks to stay abreast of emerging AI-driven attack techniques and indicators of compromise.
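The zero-trust and least-privilege recommendations above can be illustrated with a deny-by-default access check. This is a minimal sketch under stated assumptions: the role names, the `citizen_records` resource, the `Request` fields, and the `ALLOWED` table are all hypothetical and do not describe any affected agency's systems.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    role: str
    resource: str
    action: str
    mfa_verified: bool      # identity re-verified for this session
    device_compliant: bool  # device passed a posture check


# Explicit allow-list: anything not listed here is denied (least privilege).
ALLOWED = {
    ("records_clerk", "citizen_records", "read"),
    ("records_admin", "citizen_records", "read"),
    ("records_admin", "citizen_records", "export"),
}


def is_allowed(req):
    """Deny by default; trust is never implied by network location or past logins."""
    if not (req.mfa_verified and req.device_compliant):
        return False
    return (req.role, req.resource, req.action) in ALLOWED


clerk_read = Request("records_clerk", "citizen_records", "read", True, True)
clerk_export = Request("records_clerk", "citizen_records", "export", True, True)
admin_no_mfa = Request("records_admin", "citizen_records", "export", False, True)

print(is_allowed(clerk_read))
print(is_allowed(clerk_export))
print(is_allowed(admin_no_mfa))
```

The design choice worth noting is that a bulk export is a separate, narrowly granted permission, so a compromised clerk account cannot be used to pull records at scale.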

Conclusion: Adapting to an AI-Augmented Adversary

The breach of multiple Mexican government agencies using AI tools like Claude and ChatGPT is a watershed moment in cybersecurity. It unequivocally demonstrates that advanced AI is no longer just a defensive asset but a powerful weapon in the hands of malicious actors. Organizations can no longer afford to view AI in cybersecurity as a hypothetical future threat. The time for proactive, AI-aware defense strategies is now. Protecting our digital infrastructure and the privacy of citizens demands continuous vigilance and a rapid adaptation to this evolving, AI-augmented threat landscape.